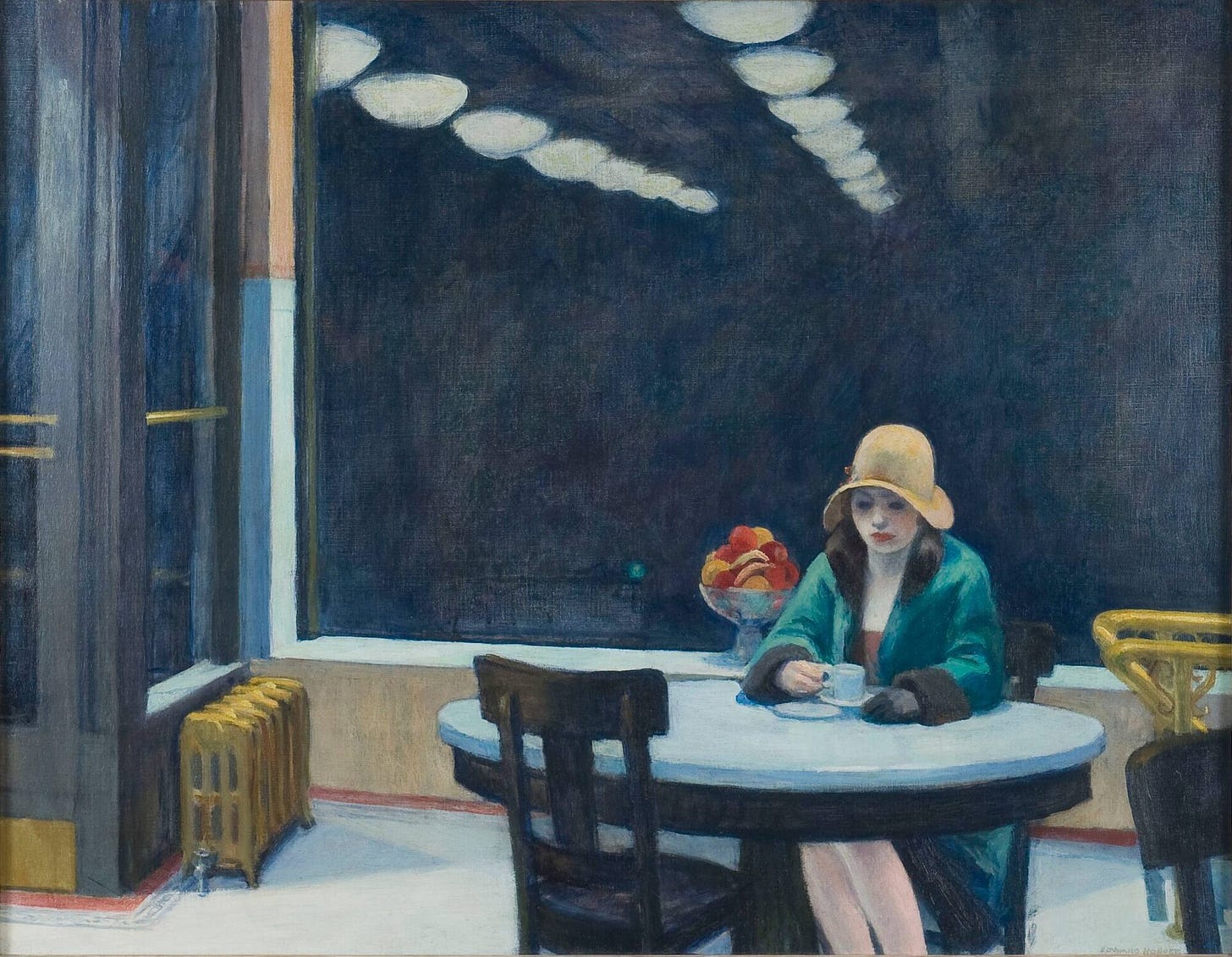

Chatting Alone

We need to unlearn model permanence

If I could show every person on Earth just one thing, I would choose the OpenAI API reference. Yes, I’m going to ignore all the life-changing potential of humanity’s art and go for a dry, unintelligible piece of tech documentation. At least in 2026, in the middle of the great AI learning process, it would be a very helpful thing to see. In fact, this post is my attempt to do exactly that.

Dear every person on Earth, there is a lot less AI in the world than it may seem. It’s all contained inside so-called “model labs”, and there are only a handful of them. Everybody else builds on top of them: every support chatbot, every “improve my writing” button, every helpful assistant. The way to “build on top” is through something called an API. Let me show you.

```bash
curl https://api.openai.com/v1/responses \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "model": "gpt-5.4",
    "input": [
      {"role": "user", "content": "Hi, my name is Alex"}
    ]
  }'
```

I’m sorry for the code snippet, but I wanted you to see exactly what it looks like, instead of re-telling it with some sort of interpretive diagram. This is the world’s simplest way to send a message to GPT-5.4 (the two `-H` lines just say “this is JSON” and “here is my API key”). It’s essentially what happens when you send a message in ChatGPT. In fact, a version of this happens in every AI consumer product.

That’s what we sent. What do we get back? Here is the response, trimmed to the interesting parts:

```json
{
  "model": "gpt-5.4-2026-03-05",
  "output": [
    {
      "type": "message",
      "content": [
        {
          "text": "Hi Alex! Nice to meet you – how can I help today?"
        }
      ],
      "role": "assistant"
    }
  ]
}
```

That’s a lot of fancy brackets, but you can see the actual response in the middle. The model replied! We’re talking. Let’s confirm it remembers my name:

```bash
curl https://api.openai.com/v1/responses \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "model": "gpt-5.4",
    "input": [
      {"role": "user", "content": "What is my name?"}
    ]
  }'
```

Response:

```json
{
  "model": "gpt-5.4-2026-03-05",
  "output": [
    {
      "content": [
        {
          "text": "I don't know your name unless you tell me. What should I call you?"
        }
      ],
      "role": "assistant"
    }
  ]
}
```

It doesn’t know.

Model Permanence

It’s very natural to think of an AI chatbot as a person. After all, it does talk like one! And all our experience with chats tells us that there is always somebody on the other end. But is that really the case?

I believe that if we dig deep enough, we’ll find one very simple concept at the core of it, an idea I call model permanence. You probably know how babies learn about object permanence, the fact that objects exist even when we don’t look at them directly. Model permanence is similar: it is the idea that an AI chatbot exists in any meaningful sense between conversation turns. But unlike object permanence, model permanence is false, and that is exactly why the model couldn’t recall my name.

Let me show you what I need to do to continue the conversation for real:

```bash
curl https://api.openai.com/v1/responses \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "model": "gpt-5.4",
    "input": [
      {"role": "user", "content": "Hi, my name is Alex"},
      {"role": "assistant", "content": "Hi Alex! Nice to meet you – how can I help today?"},
      {"role": "user", "content": "What is my name?"}
    ]
  }'
```

Response:

```json
{
  "model": "gpt-5.4-2026-03-05",
  "output": [
    {
      "content": [
        {
          "text": "Your name is Alex."
        }
      ],
      "role": "assistant"
    }
  ]
}
```

As you can see, the proper way to continue a conversation is to send the whole thing over every time. Now that the model sees the entire transcript, it can recall my name. That is hardly surprising: anyone with this transcript could do the same.
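In code, “send it over in its entirety” means the client, not the model, keeps the transcript. Below is a minimal Python sketch of that bookkeeping. To keep it runnable offline, `fake_responses_api` is a hypothetical stub standing in for the real HTTP call, and `reply_text` and `Conversation` are illustrative names, not part of any OpenAI SDK.

```python
import re


def fake_responses_api(body):
    """Hypothetical stand-in for POST /v1/responses.

    Like the curl calls in this post, it sees only the request body and keeps
    no memory between calls. It returns a trimmed, Responses-style payload.
    """
    transcript = body["input"]
    question = transcript[-1]["content"]
    if question != "What is my name?":
        text = "Nice to meet you! How can I help today?"
    else:
        text = "I don't know your name unless you tell me."
        # The only place a name can come from is the transcript we were sent.
        for turn in transcript:
            match = re.search(r"my name is (\w+)", turn["content"])
            if turn["role"] == "user" and match:
                text = f"Your name is {match.group(1)}."
    return {
        "model": body["model"],
        "output": [{"type": "message", "role": "assistant", "content": [{"text": text}]}],
    }


def reply_text(response):
    """Pull the assistant's text out of a Responses-style payload."""
    return "".join(
        part["text"]
        for item in response["output"]
        if item.get("role") == "assistant"
        for part in item.get("content", [])
        if "text" in part
    )


class Conversation:
    """Client-side chat: the transcript lives here, not in the model."""

    def __init__(self, send, model="gpt-5.4"):
        self.send = send
        self.model = model
        self.transcript = []

    def say(self, text):
        self.transcript.append({"role": "user", "content": text})
        # Every call ships the entire transcript, like the third curl request.
        response = self.send({"model": self.model, "input": list(self.transcript)})
        reply = reply_text(response)
        self.transcript.append({"role": "assistant", "content": reply})
        return reply


chat = Conversation(send=fake_responses_api)
chat.say("Hi, my name is Alex")
print(chat.say("What is my name?"))  # Your name is Alex.

# A fresh call without the history behaves like the second curl request.
lonely = fake_responses_api(
    {"model": "gpt-5.4", "input": [{"role": "user", "content": "What is my name?"}]}
)
print(reply_text(lonely))  # I don't know your name unless you tell me.
```

Swapping the stub for a real HTTP request would not change the `Conversation` class: the memory is `self.transcript`. The Responses API also offers a `previous_response_id` parameter that keeps the history on OpenAI’s servers; as I understand the docs, the earlier turns are still fed to the model (and billed as input tokens) on every call, so the bookkeeping moves but the model stays stateless.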

ChatGPT may look like a chat, but there is no single entity on the other side. If anything, it’s more akin to speed dating, where you get a new partner for every phrase you say. The other side receives a full transcript and does their best to role-play a coherent conversation. They are all excellent actors, and you’re having a great time, but in the end you will go home alone and nobody will know your name.

Computer, disenchant!

If you’re not convinced, that’s OK. There is still something, somewhere, replying to you like a real person. Who cares if it technically has to reread the whole transcript every time?

Let me show you just one more thing. I’m going to alter our conversation a little bit:

```bash
curl https://api.openai.com/v1/responses \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "model": "gpt-5.4",
    "input": [
      {"role": "user", "content": "Hi, my name is Andrew"},
      {"role": "assistant", "content": "Hi Alex! Nice to meet you – how can I help today?"},
      {"role": "user", "content": "Why did you call me Alex?"}
    ]
  }'
```

Response:

```json
{
  "model": "gpt-5.4-2026-03-05",
  "output": [
    {
      "content": [
        {
          "text": "Sorry, Andrew – I misread your name. How can I help?"
        }
      ],
      "role": "assistant"
    }
  ]
}
```

This conversation never actually happened: I just edited the transcript by hand and sent it over. And yet for the model it is the same role-playing exercise. It’s playing an assistant that, according to the script, used the wrong name. When confronted, what would that assistant say? Oh, it must have misread the name.
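Doctoring a conversation really is just editing a list before sending it. Here is a minimal Python sketch of the trick above; `rewrite_turn` and `shape` are hypothetical helper names, the replies are shortened, and nothing is actually sent. It checks that a real and a doctored request body look exactly alike: as far as I can tell, a plain `input` array carries no signature or server-side record that would let the model tell them apart.

```python
import copy

# Transcript of the conversation that really happened (replies shortened).
real_history = [
    {"role": "user", "content": "Hi, my name is Alex"},
    {"role": "assistant", "content": "Hi Alex! How can I help today?"},
]


def rewrite_turn(history, index, new_content):
    """Return a copy of history with one turn's text replaced; the original is untouched."""
    edited = copy.deepcopy(history)
    edited[index]["content"] = new_content
    return edited


# Change what "I" said, keep the assistant's reply, then ask about the mismatch.
doctored = rewrite_turn(real_history, 0, "Hi, my name is Andrew")
doctored.append({"role": "user", "content": "Why did you call me Alex?"})

real_body = {
    "model": "gpt-5.4",
    "input": real_history + [{"role": "user", "content": "What is my name?"}],
}
doctored_body = {"model": "gpt-5.4", "input": doctored}


def shape(body):
    """The structure of a request: its top-level keys plus the role of every turn."""
    return sorted(body), [turn["role"] for turn in body["input"]]


print(shape(real_body) == shape(doctored_body))  # True
```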

If you’re like me, it feels very wrong to gaslight a model like that. That is model permanence speaking. We feel bad because we have permanence: we know the past and can anticipate the future. The model does not exist like that.

For me, this is where chatbots lose their magic. I like to think that this knowledge helps me avoid falling for AI sycophancy and stay away from all kinds of AI-induced psychosis. And I hope it will help you too.

Don’t talk to Santa

If model permanence is fake, then why are we so eager to anthropomorphize AI? “Oh, Claude is extra dumb today,” we say. “GPT-4o was very fun to talk to.” Even experts do this all the time! Are we all just wrong? Or is it just a convenient way to talk about models?

Well, yes, but there is more to it. While models might not exist in the same sense as a person does, they exist as characters. Most of us have never talked to a celebrity, and yet we can love them, hate them, and reason about their personalities. Santa may not be real, but he is right here in our collective consciousness.

So which is it? Are we back at square one? Not quite. It’s fine to think of a chatbot as a person, but I do believe there is a huge difference between a naive perception and a deliberate one. We must be able to switch model permanence off and on in our heads. Otherwise we’ll find ourselves talking to Santa all day.

Thank you for reading my first post on Substack! Here’s some of my previous writing:

It's rude to show AI output to people

AI future will be nothing like present

A Farewell to Code Reviews